Falconer vs Glean: which is better in April 2026?

When your senior engineers spend half their day answering questions about code they wrote three sprints ago, you start looking at tools like Glean and Falconer. The Falconer vs Glean comparison really comes down to one thing: do you need help finding information, or do you need help keeping that information from rotting? Better search won’t fix docs that were accurate last week and wrong today.

TLDR:

- Falconer auto-updates docs when code changes, while Glean only searches existing content

- Falcon, Falconer’s AI agent, feeds accurate company context directly to Cursor and Claude Code via MCP

- Glean contracts often carry minimums around $60K annually, and many teams also dedicate an FTE to management and maintenance

- Falconer is built for engineering teams where documentation staleness is the core problem

- Falconer is a self-updating knowledge system that keeps documentation synced with your codebase

What is Glean?

Glean is an enterprise search product built around the idea that finding information at work shouldn’t require knowing exactly where to look. It indexes content across workplace apps like Google Drive, Slack, Confluence, Jira, and dozens of others, using a knowledge graph to rank results based on document relationships and user behavior patterns.

The search experience is permission-aware, meaning employees only see results they’re actually authorized to view. On top of that, Glean offers an AI assistant that can summarize documents and surface answers pulled from indexed sources instead of returning a list of links.

What Glean does well

Glean’s core value is retrieval. It connects to your tools, indexes what’s there, and helps people find things faster.

Where it falls short

Glean doesn’t maintain what it finds. If the source material is stale, Glean surfaces stale answers, quickly.

What is Falconer?

Falconer was built to solve a problem every engineering team eventually hits: documentation that’s accurate on day one and wrong by day ten. Not because anyone was careless, but because codebases move fast and docs don’t.

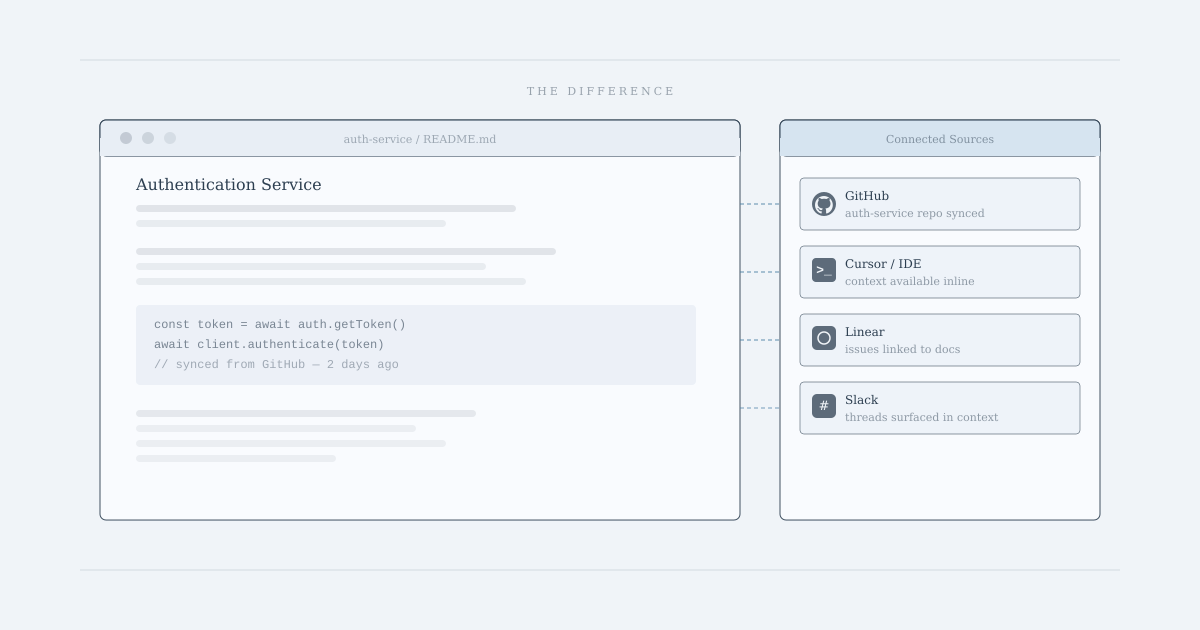

Falconer connects to your existing tools (GitHub, Slack, Linear, and Granola) and builds a living knowledge graph from all of it. When code changes, Falconer flags and updates affected docs. Pull requests and Slack threads can trigger documentation updates directly, without anyone manually tracking what changed.

What makes it different

The core distinction from a tool like Glean is that Falconer writes and maintains, instead of only retrieving. The built-in AI editor generates documents grounded in your actual codebase and company context, not generic LLM output. Search surfaces real answers across code, tasks, and docs in one place.

There’s also Falcon, the embedded AI agent, accessible from the editor, Slack, or via MCP inside tools like Cursor and Claude Code. It can answer questions, write docs, and feed accurate context directly to your coding agents.

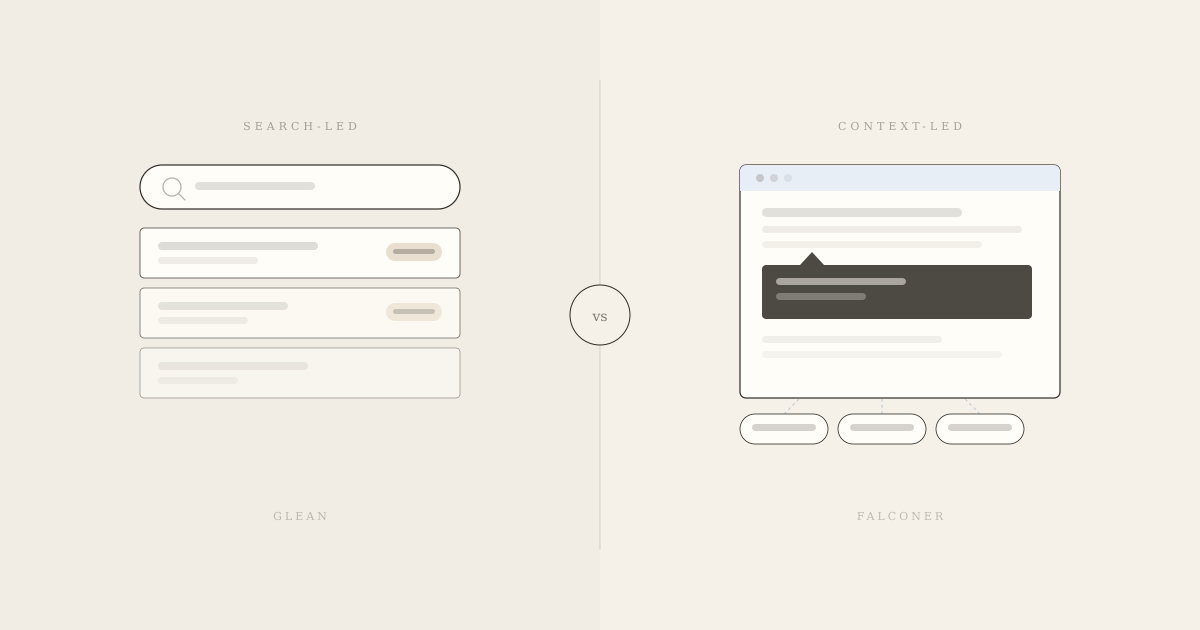

Self-updating documentation vs static search

The gap between these two products is sharpest here, and it matters most to teams shipping code daily.

Glean is built for discoverability. If your docs are accurate and well-organized, Glean helps people find them faster. That’s a real value add. But Glean searches what exists. It doesn’t watch your codebase for changes, flag outdated content, or rewrite anything when an API changes or a feature ships. If the source material is wrong, Glean retrieves wrong answers confidently.

This is where the static search model hits its ceiling. Docs go stale not because people are negligent, but because code moves faster than anyone can track. Glean’s architecture assumes someone else is handling maintenance. In practice, no one is.

Falconer treats documentation as something that has to stay alive, not just findable. When a pull request merges, Falconer flags the docs it affects. Slack threads tied to a change can trigger updates directly. Fewer updates fall through the cracks because the system is watching the source of change, not waiting for a human to notice.
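As a rough illustration of what "watching the source of change" can mean, the sketch below maps each doc to the source paths it describes and flags the docs whose sources were touched by a merged pull request. This is not Falconer’s implementation: the `DOC_SOURCES` mapping, the file paths, and the `docs_affected_by` function are all hypothetical, and a real system would more likely derive the mapping from a knowledge graph than from hand-written glob patterns.

```python
from fnmatch import fnmatch

# Hypothetical mapping: each doc lists the source paths (glob patterns) it describes.
DOC_SOURCES = {
    "docs/billing-api.md": ["services/billing/*.py", "proto/billing.proto"],
    "docs/auth-flow.md": ["services/auth/*"],
}


def docs_affected_by(changed_files, doc_sources):
    """Return the docs whose source patterns match any file changed in a merge."""
    affected = []
    for doc, patterns in doc_sources.items():
        # A doc is flagged as soon as one changed file matches one of its patterns.
        if any(fnmatch(path, pattern)
               for path in changed_files
               for pattern in patterns):
            affected.append(doc)
    return sorted(affected)


if __name__ == "__main__":
    # Files touched by a hypothetical merged pull request.
    merged_pr_files = ["services/billing/invoice.py", "README.md"]
    for doc in docs_affected_by(merged_pr_files, DOC_SOURCES):
        print(f"flag for review: {doc}")
```

The point of the sketch is the direction of the check: it starts from the change and works outward to the docs, instead of waiting for a reader to notice that a doc is wrong.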

“Knowledge is always moving. New knowledge is created daily in meetings, Slack conversations, new code pushes. Organizations need systems that can keep up with them.”

For teams whose actual problem is documentation staleness, not just discoverability, this distinction is everything. Glean is a great retrieval layer for stable, well-maintained content. Falconer is for organizations where the content itself cannot afford to be wrong.

| Feature | Falconer | Glean |
|---|---|---|
| Core purpose | Self-updating knowledge system that maintains documentation accuracy as code changes | Enterprise search tool that indexes and retrieves existing content across workplace apps |
| Documentation approach | Actively writes, updates, and maintains docs when code changes through pull request monitoring and Slack integration | Searches existing documentation but does not create or update content |
| AI agent integration | Falcon AI agent feeds accurate company context directly to Cursor and Claude Code via MCP integration | Workplace automation agents for general office workflows using natural language instructions |
| Staleness prevention | Automatically flags and updates affected docs when pull requests merge or code changes occur | No staleness detection or automatic updates; surfaces whatever content exists in connected sources |
| Setup complexity | Connects to existing tools in minutes with cloud, VPC, or on-premise deployment options | Requires dedicated FTE for management, extensive onboarding, and indexing strategy configuration |
| Pricing structure | Predictable costs with flexible deployment options and focus on engineering team integrations | Starts around $50 per user per month with $60,000 annual minimums, plus potential $10,000+ monthly cloud hosting costs |
| Best for | Engineering teams where documentation goes stale faster than manual updates can keep pace | Large enterprises with stable, well-maintained knowledge bases needing better discoverability |

AI agent integration and context quality

Both products have moved into AI agent territory, but with very different goals in mind.

Glean’s agent expansion in 2025 lets employees build and deploy agents for general office automation using natural language instructions. It’s a reasonable extension of the search product. But those agents are designed for workplace workflows, not for feeding context to the coding tools where engineers actually spend their time.

How Falconer approaches agent context

Falconer was designed from the start as a context layer for both humans and AI systems. Through MCP integration, Falcon connects directly to tools like Cursor and Claude Code, so when your coding agent asks a question, the answer comes from your actual codebase and company knowledge, not from a generic LLM guess.

This matters more than it sounds. Agents amplify whatever context they receive. Feed a coding agent vague or outdated information, and it generates code that confidently misses the mark. Feed it accurate, up-to-date company knowledge, and it becomes genuinely useful.

That’s the idea behind context sovereignty: keeping critical knowledge controlled and reusable, owned by your team, instead of scattered across one-off prompts. If you’re building AI-augmented engineering workflows, the quality of agent outputs depends entirely on the quality of context flowing in. Falconer manages that layer. Glean doesn’t.

Search architecture and knowledge philosophy

Glean’s architecture is built around a straightforward premise: information exists somewhere, and people need help finding it. Connect your tools, index the content, and surface it quickly. For organizations with stable, well-maintained knowledge bases, that’s a genuinely useful approach.

The assumption underneath it, though, is that someone else keeps the content accurate. Glean doesn’t question whether what it finds is still true.

Falconer starts from the opposite premise. Knowledge decays continuously, and better search just helps you find outdated information faster. The knowledge graph Falconer builds isn’t static. It updates as your codebase evolves, then pushes those changes to the people they affect.

The core philosophical split

This difference matters more than it might seem at first glance.

- Glean removes information silos by making existing content easier to find across tools.

- Falconer works to prevent knowledge rot by treating accuracy as an ongoing process, not a one-time indexing event.

If your actual problem is that people can’t find things, Glean fits. If your actual problem is that what they find is wrong, a search layer won’t solve it.

Setup complexity and running costs

Glean’s pricing reflects its enterprise positioning. Contracts often start around $50 per user per month, with minimum commitments around $60,000 annually. Larger deployments can reach $200,000 to $240,000 per year. Mid-sized organizations can also face cloud hosting costs exceeding $10,000 monthly, and many teams find themselves allocating a dedicated internal FTE just to manage onboarding, indexing strategies, and system performance.

That’s a real investment before anyone has written a single document.
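To make the arithmetic concrete, here is a rough sketch using the approximate figures quoted above ($50 per user per month, a $60,000 annual minimum, and optional hosting around $10,000 per month). It assumes the minimum works as a floor on annual license spend, which is an illustrative assumption and not a published Glean pricing rule. The function and parameter names are hypothetical.

```python
import math


def glean_annual_cost(seats, per_seat_monthly=50.0,
                      annual_minimum=60_000.0, hosting_monthly=0.0):
    """Estimate yearly spend, treating the annual minimum as a floor (assumption)."""
    license_cost = max(seats * per_seat_monthly * 12, annual_minimum)
    return license_cost + hosting_monthly * 12


def break_even_seats(per_seat_monthly=50.0, annual_minimum=60_000.0):
    """Smallest seat count at which per-seat pricing reaches the annual minimum."""
    return math.ceil(annual_minimum / (per_seat_monthly * 12))


if __name__ == "__main__":
    seats = 40  # hypothetical engineering org size
    yearly = glean_annual_cost(seats)
    print("break-even seats:", break_even_seats())
    print("annual license cost:", yearly)
    print("effective per-seat monthly:", yearly / seats / 12)
    print("with hosting:", glean_annual_cost(seats, hosting_monthly=10_000.0))
```

Under these assumptions, any team below 100 seats pays the minimum regardless of headcount. A 40-person engineering org would effectively pay about $125 per seat per month, before hosting or the cost of a dedicated FTE.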

Falconer connects to your existing tools in minutes. Cloud, VPC, or on-premise deployment options mean you’re not locked into one infrastructure path. SOC 2 Type II certification, AES-256 encryption, granular access controls, and BYOK for enterprise customers cover the security requirements without added overhead.

The scope difference matters too. Glean attempts to index every workplace app. Falconer focuses on the integrations engineering teams actually rely on, which keeps setup lean and ongoing costs predictable.

Why Falconer is the better choice

Glean earns its place in large enterprises with mature, stable knowledge bases. If the core problem is search across well-maintained content, it does that well.

But engineering teams whose docs can’t keep pace with the codebase have a different problem entirely. Better search just helps you find stale answers faster.

Falconer fits if any of these describe your team:

- Your best engineers spend hours answering repeated Slack questions instead of building

- You’re building AI-augmented workflows and need reliable context for coding agents

- Documentation goes out of date the moment it’s written, and no one has time to fix it

The self-updating architecture keeps knowledge current as you ship. Context sovereignty means your AI tools work from accurate information, not guesswork. And documentation debt stops accumulating.

If that’s the problem you’re solving, Falconer is built for it.

Final thoughts on documentation that stays current

Better search doesn’t fix outdated docs. Falconer approaches knowledge differently by treating accuracy as an ongoing process, not a one-time indexing event. Your docs update when code changes, your AI tools get reliable context, and your team stops answering the same Slack questions every week. Get started and see what self-updating documentation does for your workflow.

FAQ

How do I decide between Falconer and Glean for my team?

If your main challenge is finding stable, well-maintained content across many workplace apps, Glean’s search strength makes sense. If your docs can’t keep pace with your codebase and accuracy matters more than discoverability, Falconer’s self-updating architecture solves the root problem.

What’s the core difference between Falconer’s and Glean’s approach to documentation?

Glean retrieves and surfaces existing content across your tools but doesn’t maintain it. Falconer automatically flags and updates docs when your code changes, treating documentation as something that needs to stay accurate, not just findable.

Who is Falconer best suited for?

Technical teams shipping code daily where documentation goes stale faster than anyone can manually update it, and teams building AI-augmented workflows that need reliable company context feeding their coding agents. Setup takes minutes, and the system watches your codebase for changes automatically.

Can Falconer work with the coding tools my team already uses?

Yes. Through MCP integration, Falcon connects directly to tools like Cursor and Claude Code, feeding accurate context from your actual codebase to your coding agents in real time. This keeps AI outputs grounded in your company’s knowledge instead of generic LLM responses.

How do the costs of these tools compare?

Glean contracts often start around $50 per user per month with minimums around $60,000 annually, plus potential cloud hosting costs exceeding $10,000 monthly and a dedicated FTE for management. Falconer connects to your existing tools in minutes with predictable costs and flexible deployment options (cloud, VPC, or on-premise).